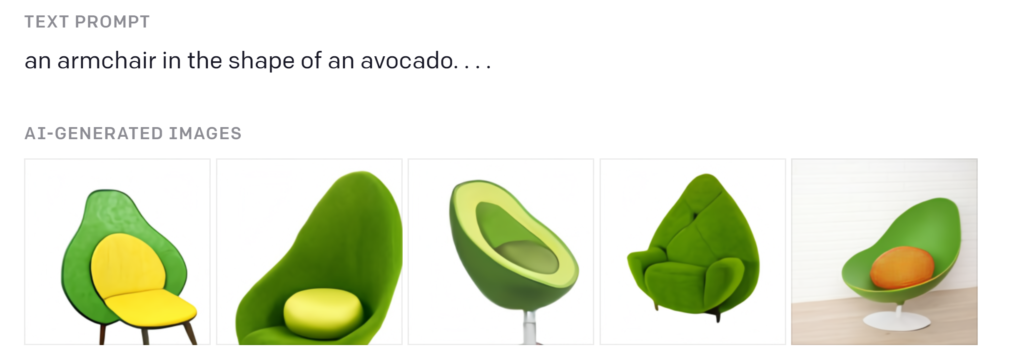

OpenAI has released DALL-E 3, the latest version of its text-to-image model. DALL-E 3 is a significant improvement over its predecessor, DALL-E 2. It follows text descriptions more faithfully and generates more realistic and creative images from them.

DALL-E 3 is a diffusion model trained on a large dataset of text and image pairs. OpenAI has not disclosed its parameter count; the often-quoted 12-billion figure belongs to the original DALL-E. It can generate images from a wide range of prompts, from simple descriptions like “a red ball” to more complex and creative ones like “a photorealistic painting of a cat sitting on a moonlit beach.”

One of the most impressive things about DALL-E 3 is its ability to generate images that are both realistic and creative. For example, it can render people and objects in different styles, such as photorealistic, cartoonish, or abstract. It can also depict surreal scenes and concepts that would be difficult or impossible to capture with traditional photography, or time-consuming to produce with editing software.

DALL-E 3 has the potential to change the way we create and interact with visual content. Possible applications include:

- Creating new forms of art and entertainment

- Designing products and services

- Developing new educational tools

- Generating realistic images for research and development purposes

OpenAI is initially making DALL-E 3 available to a limited group of users and plans to open access more broadly over time.

Here are some examples of prompts that DALL-E 3 can turn into images:

- A photorealistic painting of a cat sitting on a moonlit beach

- A cartoonish drawing of a dog flying a plane

- An abstract painting of a tree

- A surreal image of a house floating in the sky

- A realistic image of a new product design

I am excited to see how DALL-E 3 will be used. I believe it has the potential to significantly change the way we create and interact with the world around us.